How to Integrate SEO and User Experience Pt 2: Content Strategy

5/28/2012

There are some very good how-to articles and insights on building good content. However, few of them break down, in practical terms, how to think from your customer's point of view. Below is a step-by-step process for considering the customer when designing the content for your page.

Step #1: Evaluate Your Keywords

By now you should have a complete list of keywords that you want to rank for (if not, see the keyword research post below for how to build one). If you are writing content for a new webpage, evaluate these words again to help you hypothesize what information your user will want to see when they type in the query. Single words are a lot more difficult to judge than phrases. For a single word, think about what kind of theme your website has and brainstorm two or three topics within that theme that could appeal to a wide audience. Then, over time, you can experiment with the content to see which information gets your audience to convert more frequently.

When updating content on an existing page, this step becomes a little easier. Google Analytics provides you with a list of keywords that your site is already ranking for and receiving visits from. Even if you optimized the webpage to rank for certain keywords, you should still double-check this list, as it is likely that a significant portion of your audience is coming to your site for phrases you were unaware of. Check which keywords account for 65% (or more) of your audience on that page. Then group these keywords into buckets. You can then modify the content based on what you think the users in each bucket are looking for.
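
The 65% check and the bucketing step can be sketched in a few lines of Python. This is only a minimal sketch under stated assumptions: the keyword and visit numbers are made-up sample data entered by hand (not pulled from Google Analytics), `top_keywords` and `bucket_by_term` are hypothetical helper names, and matching on a shared term is a crude stand-in for judging what the user is actually looking for.

```python
from collections import defaultdict

def top_keywords(visits_by_keyword, share=0.65):
    """Return the highest-traffic keywords that together reach `share` of visits."""
    total = sum(visits_by_keyword.values())
    selected, running = [], 0
    ordered = sorted(visits_by_keyword.items(), key=lambda kv: kv[1], reverse=True)
    # Walk keywords from most to least visits until the target share is covered.
    for keyword, visits in ordered:
        if running >= share * total:
            break
        selected.append(keyword)
        running += visits
    return selected

def bucket_by_term(keywords, terms):
    """Group keywords under the first bucket term they contain (else 'other')."""
    buckets = defaultdict(list)
    for keyword in keywords:
        label = next((t for t in terms if t in keyword), "other")
        buckets[label].append(keyword)
    return dict(buckets)

# Hypothetical keyword -> visits export for a single page.
sample = {
    "running shoes reviews": 400,
    "best running shoes": 300,
    "running shoes sale": 150,
    "shoe size chart": 100,
    "marathon training": 50,
}
top = top_keywords(sample)
print(top)
print(bucket_by_term(top, ["reviews", "best", "sale"]))
```

In practice you would choose the bucket terms yourself after reading through the list, since the point of the exercise is to guess the intent behind each group.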

Step #2: Traits and Personality

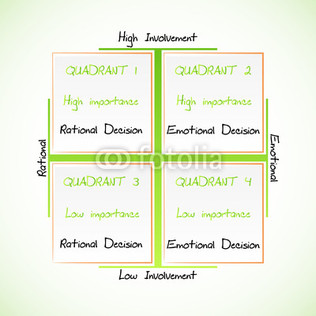

Take into consideration the personality traits of the consumer you want to attract and who is likely to convert on your website. Two great models that will help you figure this out are the FCB Grid and the VALS 2 Chart.

The Foote, Cone & Belding (FCB) Grid gives you a visual of how involved a consumer will be with your product and whether its appeal is rational or emotional. For example, are your users going to spend a lot of time researching information from multiple sources (high involvement)? If so, you may want to consider adding external links to other relevant websites at the bottom of your webpage; just make sure they are not your competitors. Is the product you’re selling, or the information you are sending out, more practical? Then the users you attract will fall under the “Think” category. Think-category users typically want factual information and don’t really want to see the kind of language you would hear in a car commercial.

Once you place your consumer at the right point on the grid, you should look at other companies that fall into this category. Then research these other organizations on the web and look at the content they produce in order to convert their users. This can be a great method to brainstorm ideas for your own pages.

VALS II is a great tool to help you figure out the personality traits of your consumer. Make sure you read through each category in order to figure out which segment (or segments) is likely to be attracted to your site and perform the conversion you want. Then read as much information as you can find about these segments.

With the FCB Grid and the VALS 2 Chart, you can gain significant insights about your users and come up with topics for your webpage that will best appeal to them.

Step #3: The Decision Making Process

Now that you have evaluated what information your customer is looking for, consider what stage of the decision-making process your customer will be in based on these keywords. Customers in the beginning stages of the process will need more information. The further along in the process your user or potential customer is, the less content is needed. If your user is somewhere between the evaluation and purchase phases (or already at the purchase phase), consider mentioning only the critical points needed to make that user convert.

It is apparent from Google’s Penguin and Panda updates that generic or auto-generated content will get your website knocked off the rankings. That is why it is important to have unique content that delivers the information your users want and helps convert your audience. With these steps you can significantly increase your odds of formulating a winning content strategy and achieving those goals.

5/23/2012

Google's Penguin Update: The Good, The Bad, and The Ugly

Although this topic has been around for a few months (and maybe even talked to death), I feel that enough information has surfaced for me to write a comprehensive report on what the Penguin Update is all about. Penguin was released on April 24th, 2012 and is an algorithm update that affected roughly 3.1% of all English queries on Google. Some of the most interesting insights and theories about Penguin can be found here. Most of the information I see on the web consists of negative comments from users who have been downgraded on major keywords that they used to rank for. However, these comments should be taken with a grain of salt, as you won’t hear much from those who have been positively impacted by the update.

The Good

One thing that has come from Penguin is better relevancy overall. Searchmetrics has published a list of the biggest winners and losers from Penguin. The more notable winners include Spotify, MensHealth, and Yellowbook. All of these sites have good content, create a nice user experience, and/or do a superb job of satisfying the needs of their users. They are also very well-known domain names. The list also shows that the big losers are sites that are database driven, have many pages with similar content, or contain auto-generated content.

Besides looking at the link profile, it is claimed that Google used keyword meaning to help determine the search results. This concept is something I’ve been monitoring for a while. Although Google has been trying to get its algorithm to read into the meaning behind keywords for some time now, there has been no significant evidence that it has succeeded in this effort. A few months ago an industry professional told me that they believe Google has begun to determine the intentions of the user when they type in a keyword. I believe that Penguin was the next step in continuing this initiative.

Another interesting detail that I learned from my research is that Google is devaluing sites whose inbound links, for the most part, have money-making keywords in their anchor text. Instead, it was recommended that the majority of links in your link profile contain more natural anchor text (such as your domain name or the words "click here").
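
To get a feel for how concentrated a link profile is, you can tally the anchor text of the links pointing at your site. The following is a rough sketch with made-up data: the anchor list and `example.com` are hypothetical, `anchor_text_shares` is an illustrative helper name, and no threshold is implied here because Google has not published one.

```python
from collections import Counter

def anchor_text_shares(anchors):
    """Return each anchor text's share of all inbound links, largest first."""
    # Normalize case and whitespace so "Cheap Widgets" and "cheap widgets" count together.
    counts = Counter(a.strip().lower() for a in anchors)
    total = sum(counts.values())
    return [(text, count / total) for text, count in counts.most_common()]

# Hypothetical anchor texts of links pointing at example.com.
anchors = [
    "cheap widgets",
    "Cheap Widgets",
    "cheap widgets",
    "example.com",
    "click here",
    "cheap widgets",
]
for text, share in anchor_text_shares(anchors):
    print(f"{text}: {share:.0%}")
```

If one money-making phrase dominates the output, that is the pattern the advice above suggests diluting with more natural anchors.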

The Bad

Although content and user experience are important, it's obvious that a website's link profile was a major factor in this update. From what I observed in forums and blogs, the major reason why sites went up or down in the SERP was their link profile. As a result, major brand-name websites experienced the biggest boost, while smaller sites appear to have suffered the most.

Websites that do not have the resources to get links on highly credible sites within their industry and that rely heavily on one or two keywords appear to have taken the biggest hit. This is unfortunate because some of these sites satisfy their users' needs just as well as their big-name competitors. Also, if these sites have spammy links pointing to them, it is much more difficult for them than for big-name websites to counter these links with credible ones or to get Google to void them.

Another thing, which is still being debated, is whether Google is punishing sites with spammy links or just discounting those links. It would seem more logical for Google to just void these links altogether. This would still cause websites to lose keyword rankings; however, the value of their white hat SEO efforts would be preserved.

The Ugly

One thing that has been brought to light is negative SEO, in particular link spam. Many website owners are complaining that their site has been penalized because other companies blasted spammy links at it, which took the site down in the SERP. It’s not 100% certain whether these sites are going down because of link spamming tactics or natural causes, but if negative SEO is happening, then it creates a scary new weapon for unscrupulous organizations on the internet.

All it would take is a program that could quickly blast links with the same anchor text across several free WordPress sites, and your website could be taken down. I plan on talking about this topic more in the future, but if this tactic really does work, then Google needs to quickly come up with a system that will negate link spamming.

Conclusion

In short, the Penguin Update is the next step by Google to push webmasters to create good websites that satisfy the needs of the user and stay within the Google guidelines. There is no doubt that holes exist in this recent update, but Google is constantly updating its algorithm, which is apparent from the 27,000 updates it had in 2011. If your site has been negatively affected by the update, then it is time to focus on tactics that will increase your rankings.

5/21/2012

How to Integrate SEO and User-Experience - Part 1 (Keyword Research)

When designing your website, the number one thing that should be on your mind is user-experience. Don't get me wrong, on-page SEO and link building are still very important when designing your website. However, user-experience is the most important thing to keep in mind, and it can run seamlessly through the SEO process. While looking through blog postings and news articles from major online marketing websites, I found that there is sparse information about how to successfully integrate user-experience into the SEO process. This, to me, is a BIG MISTAKE considering how Google is continuously adjusting its algorithm in order to force webmasters to make high quality websites for users. So I decided to write a multi-part post about this subject.

Now let's take a look at keyword research. We all have our own unique process for generating a list of keywords to put on a webpage, depending on what tools we have available. However, what should be considered from step one is the user. This includes picking and choosing keywords that will attract the right user to your site. Most people, when they are going through keyword research, will pick the phrase (or word) in their list that has the highest volume of traffic. To them, the more visitors they can get to their site, the better chance they have of getting someone to convert. But this is the equivalent of buying 100 lottery tickets and hoping that one of them will win you millions of dollars.

Instead, it is better to pick and choose keywords that have a good number of users querying for them and that are typed by users who will perform the action that you want (whether that's buying a product, downloading a whitepaper, or commenting on a blog post). These words may only have a couple hundred users searching for them, compared to a phrase that has a few thousand people searching for it, but it's better to have one person convert on your page than to have a thousand people show up and leave.

For example, I have been working on a website that contains a section which gets hundreds of visitors a month. However, the vast majority of these users, who come to the pages based on a major keyword phrase, leave as quickly as they showed up. These pages have increased the bounce rate of the website, which has contributed to the website going down in Google's rankings. My boss recently decided to get rid of these pages. So when doing keyword research, make sure that your top phrases are words that will attract the right audience and lead to high conversion rates for your site.
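
The trade-off between search volume and conversion can be put into simple arithmetic: expected conversions are roughly visits multiplied by conversion rate. The sketch below uses invented numbers purely for illustration; the keywords, visit estimates, and conversion rates are assumptions, and `expected_conversions` is a hypothetical helper, not a figure taken from any analytics tool.

```python
def expected_conversions(monthly_visits, conversion_rate):
    """Estimate conversions per month as visits times conversion rate."""
    return monthly_visits * conversion_rate

# Hypothetical candidates: (keyword, estimated monthly visits, assumed conversion rate).
candidates = [
    ("shoes", 5000, 0.001),
    ("waterproof trail running shoes", 300, 0.04),
]

# Rank keywords by expected conversions rather than by raw traffic.
ranked = sorted(
    candidates,
    key=lambda c: expected_conversions(c[1], c[2]),
    reverse=True,
)
for keyword, visits, rate in ranked:
    print(keyword, round(expected_conversions(visits, rate), 1))
```

With these assumed numbers, the lower-volume phrase comes out ahead, which is the situation described above.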

It is also important to pick keywords that closely fit the topic of the webpage and that you can include effortlessly in your content. This means avoiding keyword stuffing. With the advancement of Google's technology, I believe it is just a matter of time before its AI systems can interpret the meaning of content and pick out words that are out of place on the page. If you can't write meaningful content based on your keyword, it's probably best just to leave it out.

(Part 2 coming soon)
